Shadow AI in the Workplace

Shadow AI refers to the unsanctioned use of AI tools by employees without organizational oversight. This article explores the risks it poses to compliance and data protection, and provides practical steps to detect and govern its use responsibly—ensuring alignment with the GDPR, the EU AI Act, and internal policies.

AI Persona Rights: Who Owns Your Digital Clone?

AI personas are revolutionizing identity in the digital age—but at what cost? This article explores the legal, privacy, and ethical risks of cloning your voice, face, or personality. From unclear ownership rights to data misuse, learn why protecting your digital self has never been more critical.

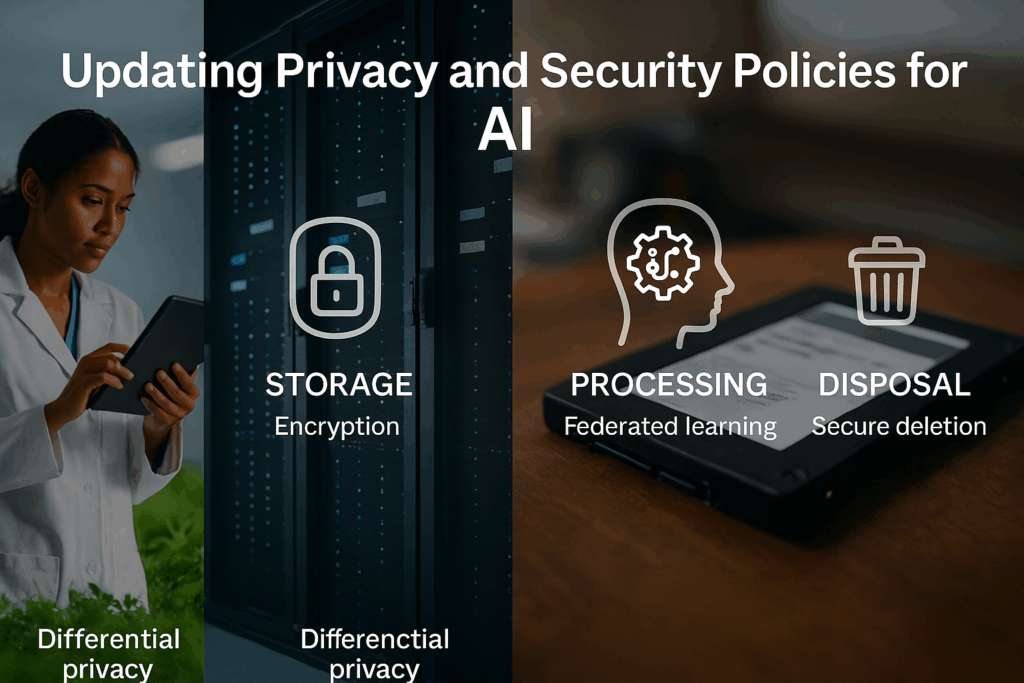

Updating Policies for AI Privacy and Security

AI introduces privacy and security risks that traditional policies can’t handle. This article explains how to update your policies to address AI-specific challenges, from re-identification and model attacks to compliance with the EU AI Act and NIST guidelines.

Innovation Regulation in AI Governance

In AI governance, innovation and regulation often collide. While rapid development drives progress, regulatory frameworks demand caution, clarity, and control. This article explores the Innovation Regulation Paradox—how the push for speed can be hindered by compliance needs. It offers strategies for integrating governance into development, enabling responsible innovation without sacrificing agility. The final piece in our paradox series.

Solving the Data Paradox in AI Governance

AI systems require vast datasets to perform effectively, yet privacy laws demand minimization, purpose limitation, and short retention. This article explores how organizations can reconcile these conflicting imperatives—balancing legal compliance with technical performance—through strategic governance, privacy-preserving techniques, and proactive design principles that embed ethical data use into AI development.

The Global Local Regulatory Paradox in AI Governance

AI systems are global, but the laws that govern them are local. The Global Local Regulatory Paradox explores the compliance challenges this creates—and how organizations can build adaptive governance frameworks to manage fragmented regulatory demands across jurisdictions.

The Autonomy Accountability Paradox

As AI systems take on more decision-making power, humans remain legally and ethically responsible for their outcomes. This disconnect—known as the Autonomy Accountability Paradox—raises urgent questions about control, liability, and governance in an increasingly automated world.

Navigating the Transparency Paradox in AI Governance

The rise of artificial intelligence has introduced a wave of global regulatory frameworks, all demanding greater transparency. At the same time, companies are under increasing pressure to protect the intellectual property behind their AI models. These two forces are fundamentally at odds, creating what has become known as the Transparency Paradox. This paradox is just […]

Responsible AI Principles: Ensuring Fairness, Safety, and Transparency in AI Systems

Responsible AI principles ensure fairness, safety, transparency, and accountability in AI systems. By addressing bias, enhancing security, and maintaining human oversight, organizations can build ethical AI that aligns with societal values. Strong governance and continuous monitoring help mitigate risks, fostering trust in AI’s role in critical decision-making and daily life.